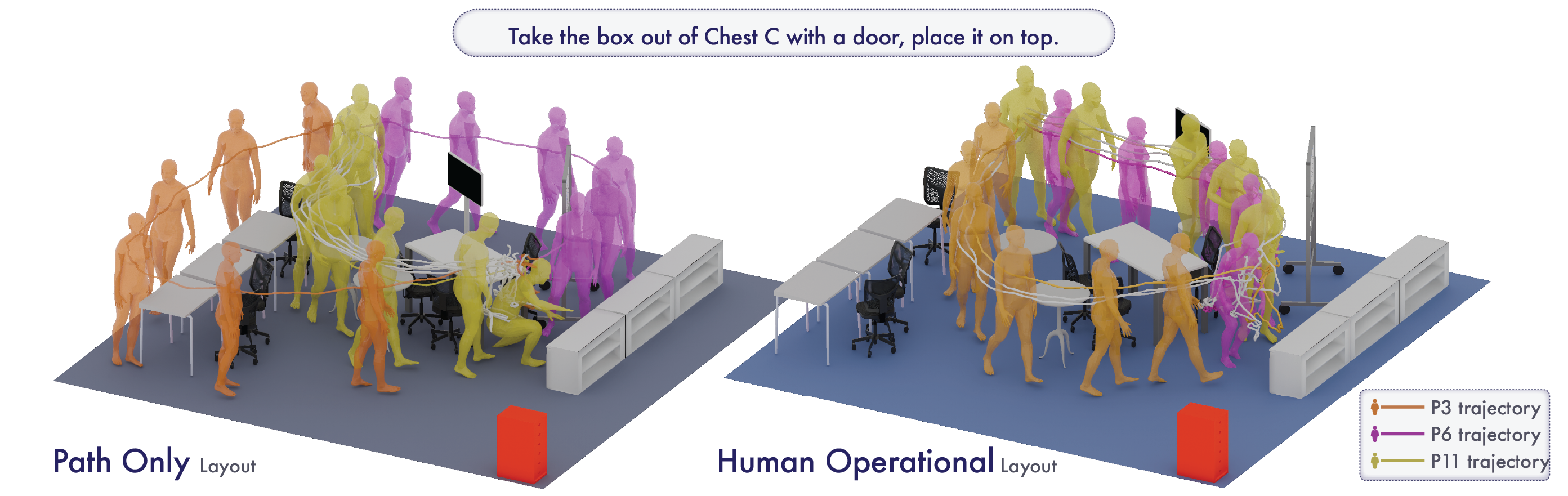

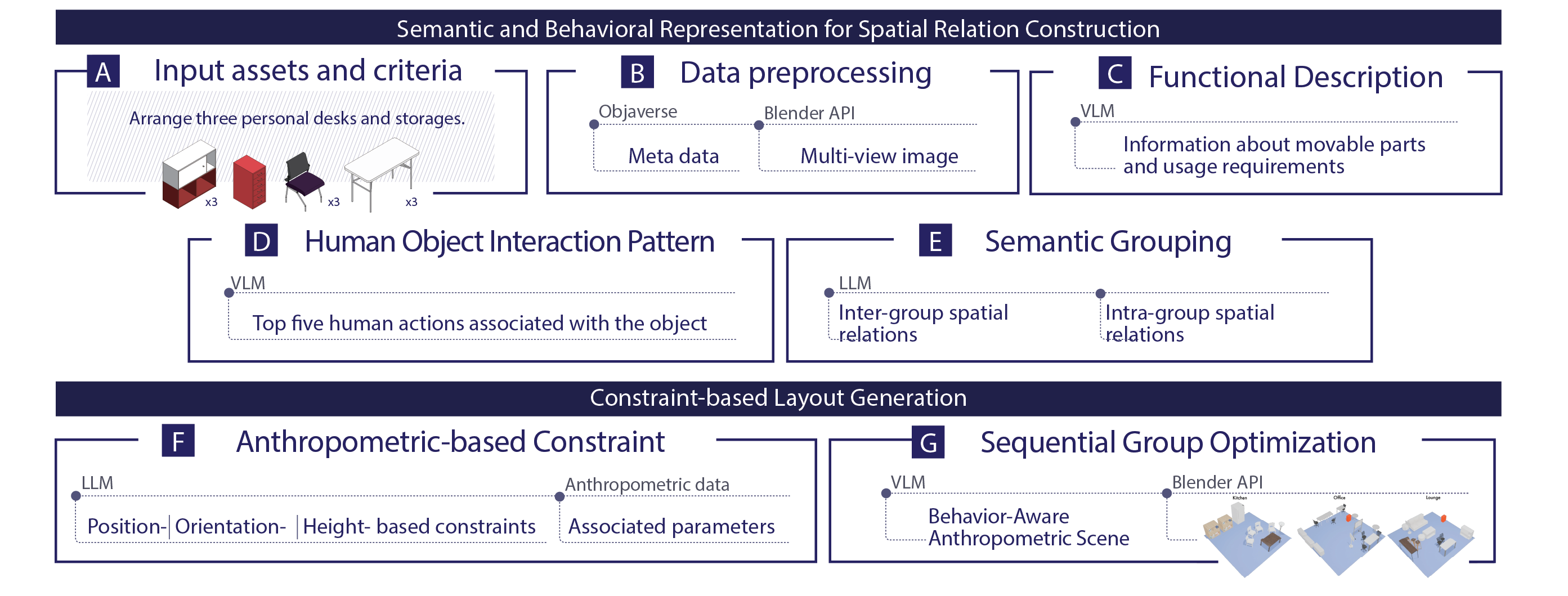

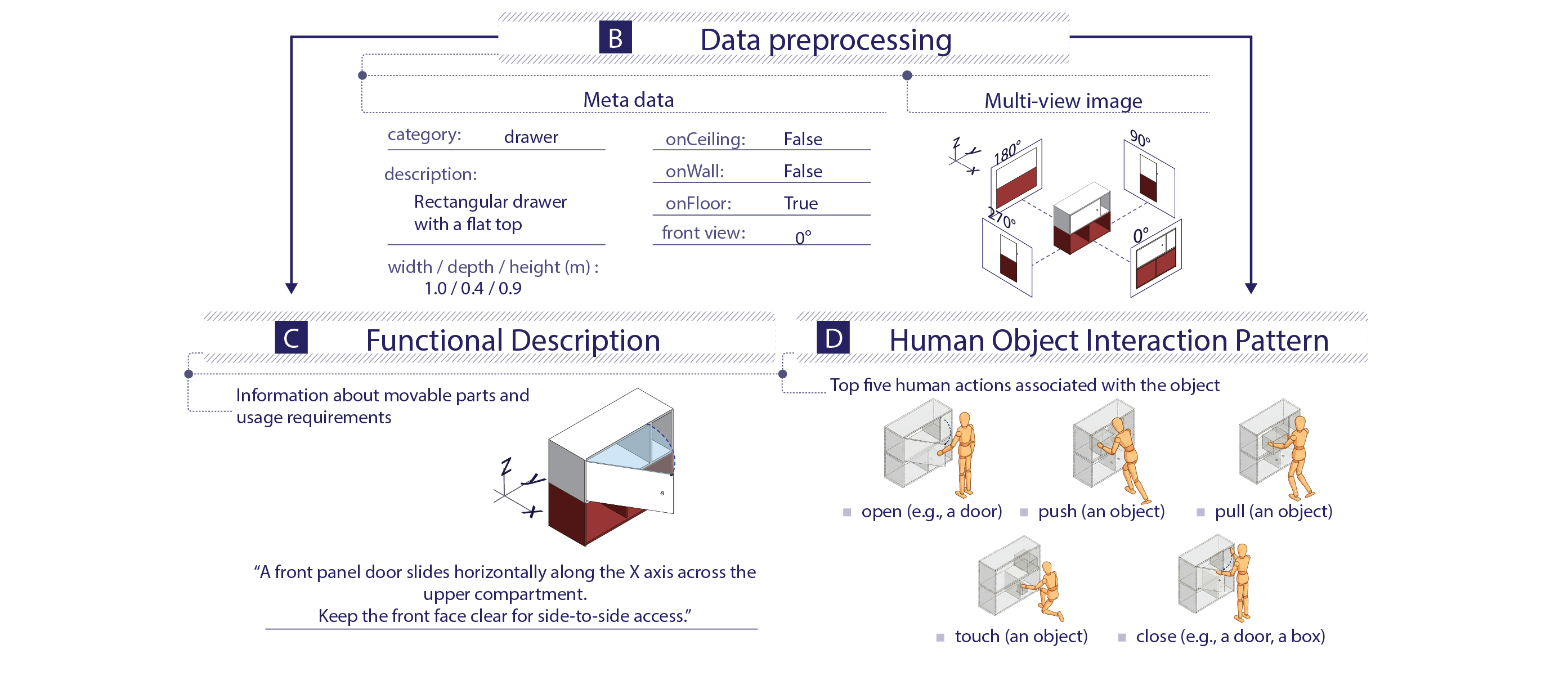

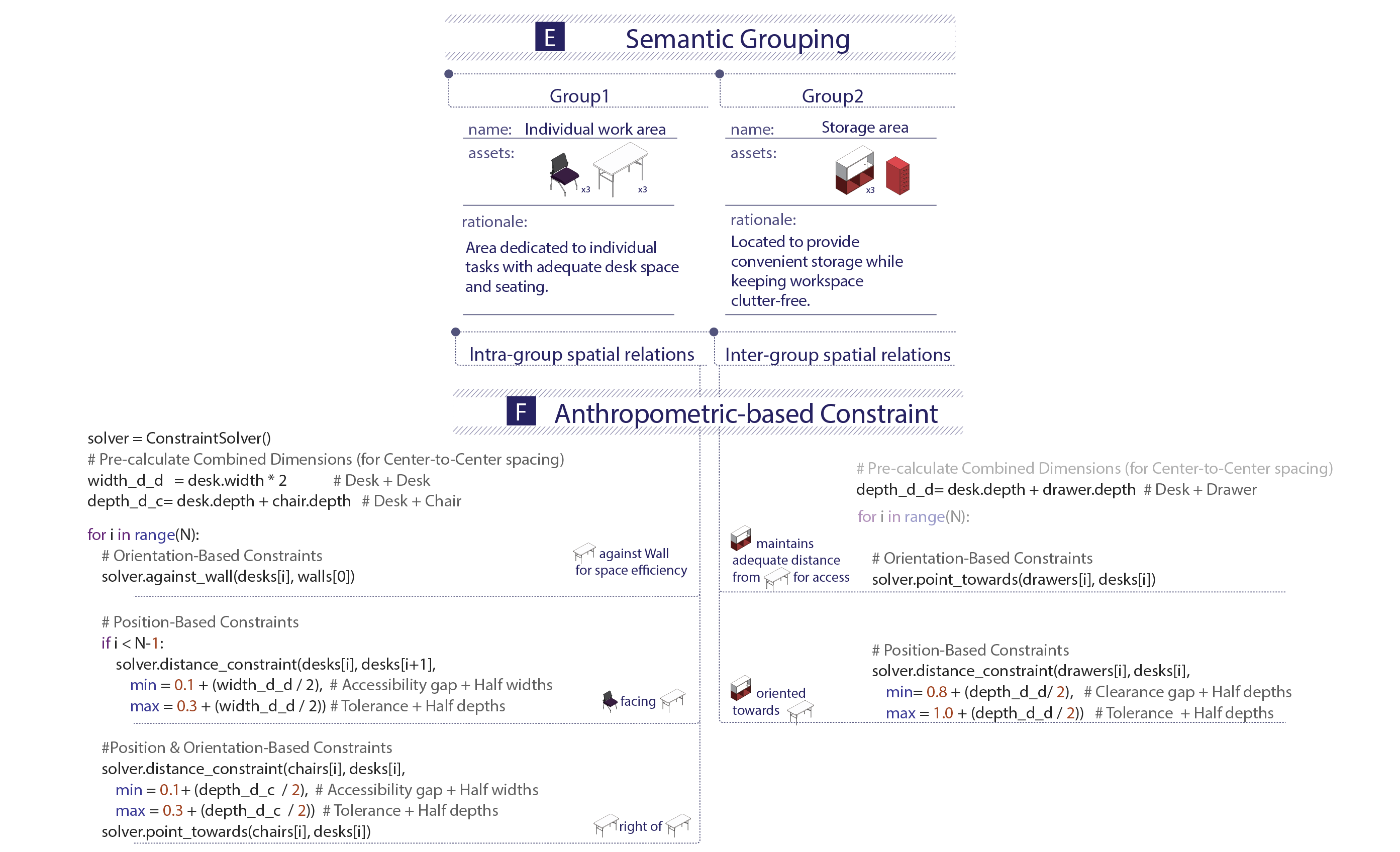

Well-designed indoor scenes should prioritize how people can act within a space, not merely which objects are placed in it. However, existing 3D scene generation methods emphasize visual and semantic plausibility and do not adequately address whether people can comfortably walk, sit, or manipulate objects. We present a Behavior-Aware Anthropometric Scene Generation framework that uses vision–language models to analyze object–behavior relationships and translates the resulting spatial requirements into parametric layout constraints adapted to user-specific anthropometric data.
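To make the idea of an anthropometry-dependent layout constraint concrete, here is a minimal sketch of one such constraint: a walkway between two objects must be at least as wide as the user's shoulder breadth plus a clearance margin on each side. This is an illustration only, not the framework's implementation. The class names, the `min_walkway_width` rule, and the 0.10 m margin are all assumptions introduced for this example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Anthropometrics:
    """Hypothetical per-user body measurements, in metres."""
    shoulder_breadth: float


@dataclass(frozen=True)
class Box:
    """Axis-aligned object footprint on the floor plane, in metres."""
    x_min: float
    x_max: float
    y_min: float
    y_max: float


def min_walkway_width(body: Anthropometrics, margin: float = 0.10) -> float:
    """Assumed rule: shoulder breadth plus a clearance margin on each side."""
    return body.shoulder_breadth + 2 * margin


def gap_along_x(a: Box, b: Box) -> float:
    """Free distance between two footprints along x (0 if they overlap in x)."""
    return max(0.0, max(a.x_min, b.x_min) - min(a.x_max, b.x_max))


def walkway_ok(a: Box, b: Box, body: Anthropometrics, margin: float = 0.10) -> bool:
    """Check whether the gap between two objects satisfies the walkway constraint."""
    return gap_along_x(a, b) >= min_walkway_width(body, margin)


if __name__ == "__main__":
    user = Anthropometrics(shoulder_breadth=0.45)
    sofa = Box(0.0, 2.0, 0.0, 0.9)
    table_near = Box(2.5, 3.5, 0.0, 0.9)
    table_far = Box(2.7, 3.7, 0.0, 0.9)
    print(walkway_ok(sofa, table_near, user))
    print(walkway_ok(sofa, table_far, user))
```

Because the threshold is a function of the user's measurements rather than a fixed constant, the same layout can pass for one user and fail for another, which is the sense in which the constraint is parametric.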

@inproceedings{jin2026behavioraware,
  title = {Behavior-Aware Anthropometric Scene Generation for Human-Usable 3D Layouts},
  year = {2026}
}